Building Better Personal Software

Taking an AI-led coding practice from 'vibe code' to 'good software'.

The AI coding landscape has moved so quickly over the past 2 years that as I sit down to write this, I find myself having trouble pinpointing specific timelines as to how my personal practice has developed. Sometime in 2023/24 I started building neat little productivity tools, which got better and better over time, and then in early 2025 I switched to Claude Code, and it's been an absolute blur since then.

The release of Sonnet 3.7 (Feb 2025) felt like a turning point - all of a sudden, I felt like I had a coding buddy who genuinely earned my trust, and whose output was so good that I felt confident enough sharing it with other people. Claude Code hit GA in late May 2025, Opus 4 was released alongside it, and I started working on bigger challenges in longer sessions.

One of the projects I tackled in the summer of 2025 was replacing my process for designing workflows, to see if I could cut what was sometimes a multi-day endeavour down to something vastly quicker and more structured, as well as bringing it into an AI-native world.

My first pass was a good proof of concept, but the models weren't ready yet to work in the way I needed them to work. I'm not a developer; I'm a technically capable product owner. I needed a dev team -- and I got one, when Opus 4.5 released.

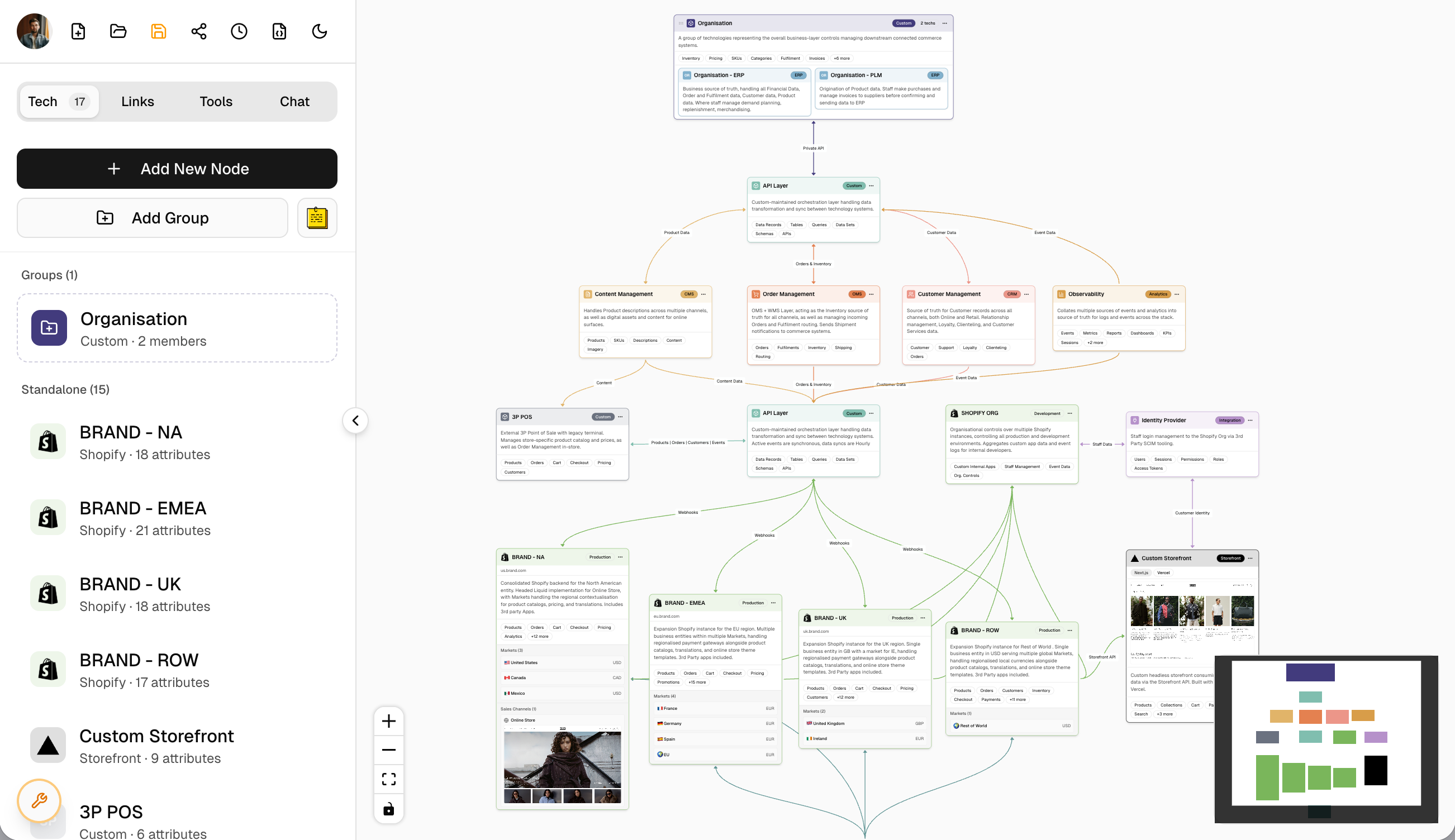

So I started working on this personal app:

(This is a generalised view of a standard Shopify tech stack - not representative of any merchant in particular, and contains no proprietary non-public information.)

I'm not going to get into exactly what it is or what it does. I want to focus more on what it took to get it to its current state of 'everyday software I actually enjoy using'.

- It's a visual editor that renders structured JSON data models in a beautiful, clean, organised way that matches my own aesthetic. Anyone can use it and expect consistent visual results.

- Branding and data attributes for a multitude of technologies are handled automatically. The app knows its purpose and has a brain that is tuned to think like a Solutions Architect.

- Contextual understanding of Shopify and technology is baked in. It knows how to model Shopify stores given their configuration, and it treats Shopify data as a first-class citizen.

-

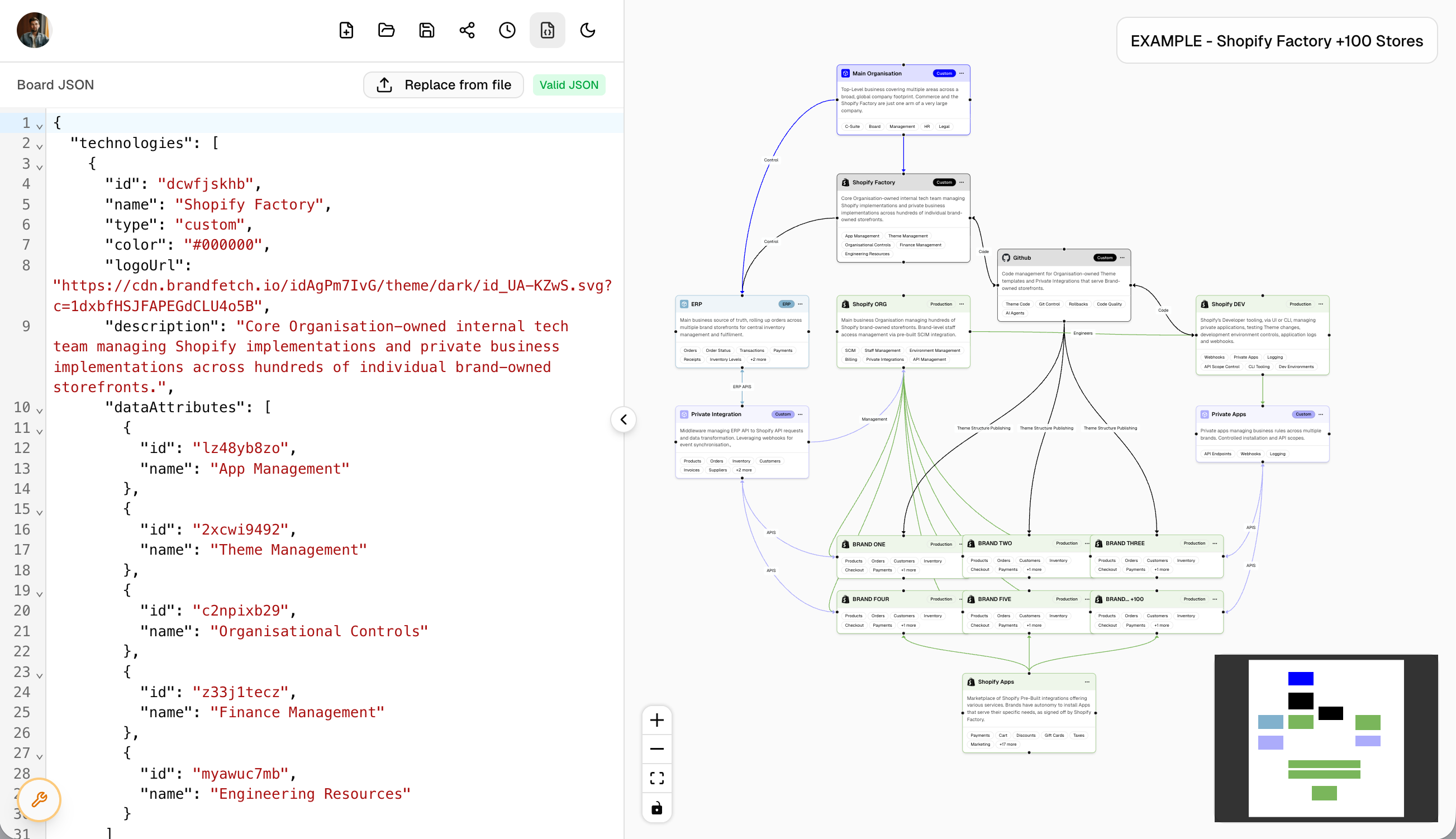

Everything it does is AI-centric. It can parse .md files and extract relevant architectural information to automatically build a board, or you can work with an agent to build the JSON data structure that underpins a board and upload that directly. Exporting supports both .md and .json formats as well, so a board's context can be fed to agents easily.

(Again, simply a demonstrative generalised example architecture showing a typical multi-store CI/CD workflow)
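To make the JSON side of this concrete, here is a minimal sketch of what a board model with .json and .md export could look like. The schema (`Node`, `Edge`, `Board`) and every field name are assumptions made up for illustration; this is not the app's actual data model.

```python
import json
from dataclasses import dataclass, field, asdict


# Hypothetical schema: field names are illustrative, not the app's real model.
@dataclass
class Node:
    id: str
    label: str
    kind: str  # e.g. "store", "app", "integration"


@dataclass
class Edge:
    source: str
    target: str
    label: str = ""


@dataclass
class Board:
    title: str
    nodes: list[Node] = field(default_factory=list)
    edges: list[Edge] = field(default_factory=list)


def board_from_json(text: str) -> Board:
    """Build a Board from JSON, rejecting edges that point at unknown nodes."""
    raw = json.loads(text)
    nodes = [Node(**n) for n in raw.get("nodes", [])]
    edges = [Edge(**e) for e in raw.get("edges", [])]
    known = {n.id for n in nodes}
    for e in edges:
        if e.source not in known or e.target not in known:
            raise ValueError(f"edge {e.source} -> {e.target} references an unknown node")
    return Board(title=raw["title"], nodes=nodes, edges=edges)


def board_to_json(board: Board) -> str:
    """Serialise the board back to JSON for upload or agent context."""
    return json.dumps(asdict(board), indent=2)


def board_to_markdown(board: Board) -> str:
    """Render the board as a short markdown summary an agent can read."""
    labels = {n.id: n.label for n in board.nodes}
    lines = [f"# {board.title}", "", "## Components"]
    lines += [f"- {n.label} ({n.kind})" for n in board.nodes]
    lines += ["", "## Connections"]
    for e in board.edges:
        suffix = f": {e.label}" if e.label else ""
        lines.append(f"- {labels[e.source]} -> {labels[e.target]}{suffix}")
    return "\n".join(lines)
```

The point of validating edges on import is that an agent-built JSON file can be rejected early with a clear error, rather than rendering a half-broken board.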

A few things had to change about me as a person to get to this current stage of the project.

Actually Plan Something Out

Listen, I like to get things done. I'm self-aware enough to know that can sometimes come at the expense of sitting down and thinking ahead for a while. I'm getting better, and for this project I did it the best I've ever done (so far!).

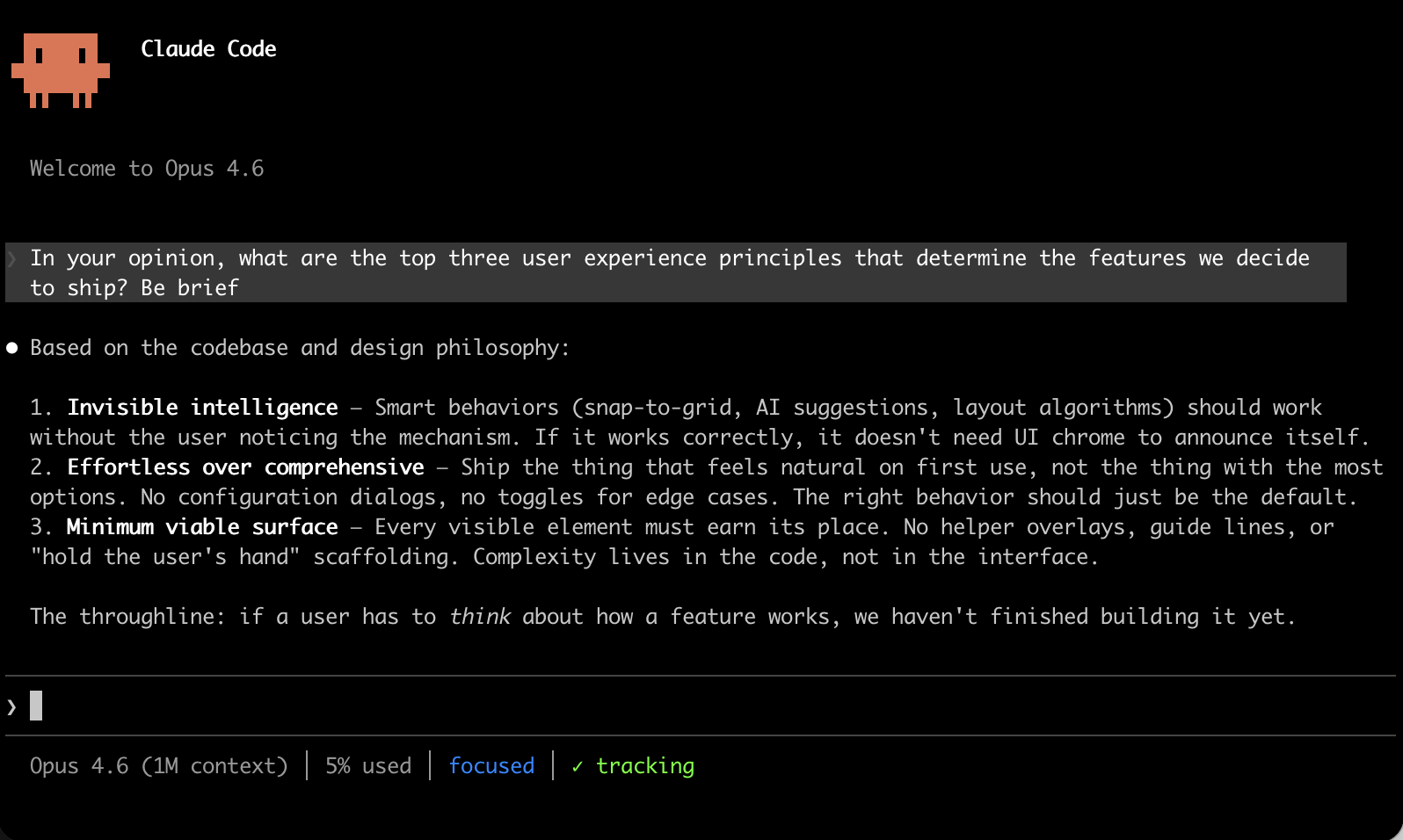

Acting as a Product Owner, I developed an 'Engineering Manager' agent and wrote it to the main claude.md file in my project. We then worked together over the course of a few days setting out product principles, comprehensive user journeys, desired workstreams, and a loose product roadmap. Some of the principles we outlined in detail and set development rules against were:

- The tool needs to know what it is for, with an internal intelligence, and understand what it was designed to accomplish.

- It should be an indispensable companion that users feel connected to because it helps simplify complexity.

- It should feel like magic. Things snap into place, beautiful results are consistent, smart suggestions are made, and everything just seems to be easily accessible.

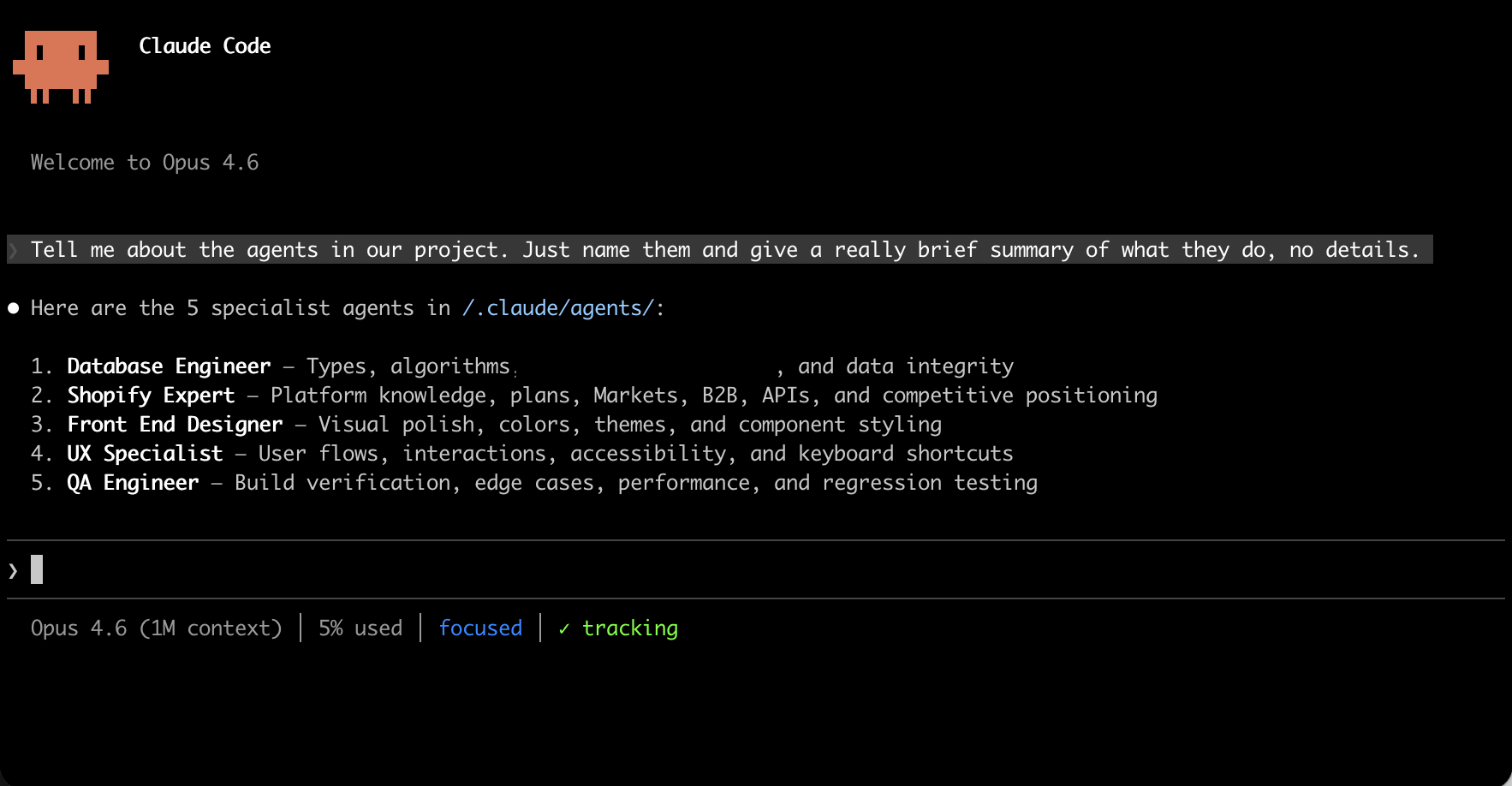

It formed the foundational base of our shared understanding. Then, we set about building a team of sub-agents with specialised skills: a database engineer, a Shopify expert, a front-end designer, a UX specialist, and a QA engineer.

Every time I sit down to work on the project, I have a standup with my Engineering Manager Agent and plan out the sprint's features and dependencies. Claude Code's 'plan mode' is amazing for this, and has vastly improved long-form sessions. Before the agent has a chance to get 'dumb' once the context window is around 60-70% full, we'll start a new plan and clear context to work on the next feature.

Intentionality Along the Build Process

One of the biggest mistakes I've seen in the experimentation phase that kills productivity is bloating the project with the fanciest, newest thing that nobody was looking for, essentially introducing solutions to things that weren't problems. Don't pull data from my email and calendar into a dashboard in a new tab and tell me it's a 'productivity tool'. Do something difficult that helps me.

To that end, I took the approach of being the absolute arbiter of what makes it into my project and what doesn't. I solicited user feedback, accepted maybe 40% of it, and tabled the rest, keeping the intent of the feedback and discarding the suggestions on how exactly it should work. This is where referring back to the product principles really helps ground your ideas.

For example, 'sharing' was the most obvious improvement feedback in the Alpha development phase. My team wanted a way to share boards with others so that collaborators could see them and interact with them. It took me a few days to plan it out:

- Should shared boards that are 'view only' allow exporting?

- If User A on the board makes a change and User B hits 'cmd-z' to undo the change... what happens?

- What's the appropriate experience for a user who is removed as a collaborator while they're actively editing a board?

... and more and more. These are the small things that an AI agent is not yet very good at determining. You actually need to think at a human level about what people do, and people are uncannily creative in the way they can break things.
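To show why the undo question above is harder than it looks, here is a minimal sketch of one possible policy: each collaborator gets their own undo stack, and an undo is refused if someone else has since changed the same field. This is an illustration under assumptions, not how the app actually settles it; `SharedBoard`, `Change`, and the policy itself are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Change:
    user: str
    key: str
    before: object  # None is treated as "key did not exist" in this toy model
    after: object


class SharedBoard:
    """Toy shared state with per-user undo. Hypothetical, for illustration only."""

    def __init__(self) -> None:
        self.state: dict[str, object] = {}
        self.history: dict[str, list[Change]] = {}

    def edit(self, user: str, key: str, value: object) -> None:
        # Record what the field held before, so this user can revert it later.
        change = Change(user, key, self.state.get(key), value)
        self.state[key] = value
        self.history.setdefault(user, []).append(change)

    def undo(self, user: str) -> bool:
        """Revert this user's latest change; refuse if it has been overwritten."""
        stack = self.history.get(user, [])
        if not stack:
            return False  # this user has nothing of their own to undo
        change = stack[-1]
        if self.state.get(change.key) != change.after:
            return False  # someone else edited the same field since; leave it alone
        stack.pop()
        if change.before is None:
            self.state.pop(change.key, None)
        else:
            self.state[change.key] = change.before
        return True
```

Under this policy, User B hitting 'cmd-z' never touches User A's change: `undo("B")` only looks at B's own stack. Whether that is the right experience is still a product decision, which is exactly the kind of call that needs a human.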

Bad Input Equals Bad Output

This sounds so simple, but sometimes AI feels so magic now that it can be missed when scaffolding a project. Sure, an Agent with browser or MCP access can do a lot of heavy lifting (if it's asked to) to inform itself while it's developing a solution.

However, in my experience, taking a few hours to first generate a really solid collection of research files within the project itself is critical to efficiently building something with an agentic team. They can reference those files or Skills, and if they don't find what they need, then they can use the tools at their disposal to go find out what they need to know.

Something I did recently to make building research more fun was spin up a quick 'quiz' to personally explore the resulting files a swarm of agents generated. Instead of reading them all, I asked my agentic team to come up with trivia questions related to their findings, and I generated a scene in which to interact with the quiz.

This essentially allowed me to spot-check that the research was factual without dryly reading a bunch of boring details I don't really need to know. As long as I could feel confident feeding it to an agent as context, I was happy.

Stop Using AI as a Better Google

I'll wrap this up here - an article that deeply affected me was this post by Matt Shumer in which he accurately describes why "nothing that can be done on a computer is safe in the medium term".

When I started asking the agents I was working with harder questions, I started getting more used to what they needed from me to truly succeed at what we were targeting. It was a constant improvement feedback loop with something that never gets tired, or uninterested, or frustrated.

The upside is high if you just ask questions and let yourself be humbled that we're not power users of the computer anymore. The computers know what they're doing now - they can have it.

I'll spend my time imagining harder problems to solve.

✌️